Introduction

Risk management is a major part of banking operations. Like any other business, banking faces considerable risk. However, because governments, the public, and businesses all hold large stakes in banks, that risk weighs more heavily in banking than in other industries.

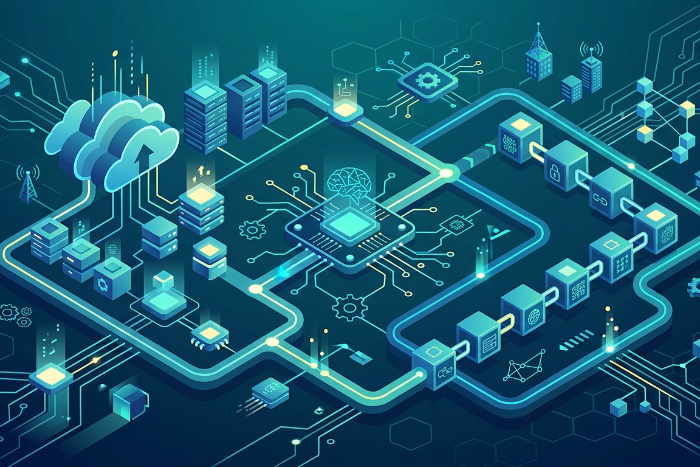

Earlier, banking operations had limited offerings and a smaller, relationship-based customer base, but growth in industrialization, trade, and regulatory oversight has made risk management crucial. On top of that, banks serve anywhere from thousands to millions of customers, and the volume of transactions generated by such a large customer base is difficult to analyze using traditional means. Introducing AI and ML in banking apps and services has led to a more customer-centric and technologically relevant sector.

Banks can implement Artificial Intelligence (AI) and Machine Learning (ML) technologies to analyze large volumes of data, assess risks, and develop more robust strategies to manage them. In this blog, we’ll explore how AI and ML in banking enhance risk management, improve compliance, detect fraud, and boost efficiency, driving smarter, data-driven decisions.

How Can AI and Machine Learning Help Banks Manage Risk?

Banks face a more diverse set of risks today owing to emerging technologies, growing customer demands, market volatility, and an increase in cyber threats.

Enhancing Credit Risk Assessment

- Credit risk is one of the most prominent risks banks face. Banks need to understand the risks associated with lending money to a business or an individual.

- Machine Learning models can go beyond traditional credit scores by analyzing borrowers’ income and expense patterns and current financial condition to assess credit risk more accurately.

- ML models can also analyze large amounts of borrower data, economic factors, and historical defaults to create a complete profile for informed lending decisions.

AI and ML enable banks to analyze vast amounts of data for more accurate credit risk assessments. By evaluating patterns and predicting potential defaults, these technologies assist in making informed lending decisions.
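To make the idea of predicting defaults from borrower patterns more concrete, here is a minimal sketch in plain Python. It fits a small logistic regression by gradient descent on a hypothetical toy dataset. The two features (expense-to-income ratio and number of past missed payments), the sample values, and the function names are all assumptions made for illustration. A real credit model would use far more data, more features, and proper validation.

```python
import math

# Hypothetical toy data, invented for illustration only:
# (expense-to-income ratio, past missed payments) -> 1 if defaulted, 0 if repaid
BORROWERS = [
    ((0.30, 0), 0),
    ((0.35, 0), 0),
    ((0.45, 1), 0),
    ((0.50, 0), 0),
    ((0.70, 2), 1),
    ((0.80, 1), 1),
    ((0.85, 3), 1),
    ((0.95, 2), 1),
]


def sigmoid(z):
    """Map a raw score to a value between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(weights, bias, features):
    """Estimated default probability for one borrower."""
    score = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(score)


def train(data, epochs=2000, lr=0.1):
    """Fit logistic regression weights with batch gradient descent."""
    n_features = len(data[0][0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for features, label in data:
            # Gradient of the log loss with respect to the raw score
            error = predict_proba(weights, bias, features) - label
            for i, x in enumerate(features):
                grad_w[i] += error * x
            grad_b += error
        weights = [w - lr * g / len(data) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(data)
    return weights, bias


weights, bias = train(BORROWERS)

# Score two hypothetical applicants
low_risk = predict_proba(weights, bias, (0.30, 0))
high_risk = predict_proba(weights, bias, (0.90, 3))
print(f"Low-spending applicant, no missed payments: {low_risk:.2f}")
print(f"High-spending applicant, three missed payments: {high_risk:.2f}")
```

The model assigns a higher estimated default probability to the applicant with heavier spending and more missed payments. Production systems build on the same principle with richer borrower data, economic indicators, and historical default records.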